The Case for Human-in-the-Loop Compliance

AI can draft answers to a security questionnaire in seconds, but that doesn't mean it should submit one. In compliance and vendor security reviews, speed without control is a liability. Buyers are not evaluating how quickly you can respond; they are evaluating whether they can trust what you say.

That is why human-in-the-loop AI is not a compromise. It's the only responsible model.

Compliance is about accountability

Security questionnaires are not creative writing exercises; they are formal representations of your security posture. Someone in your organization owns those answers. A founder, a CTO, or a head of security ultimately has to stand behind every statement. "The AI wrote it" is not a defensible position when a contract references your responses.

Human review preserves accountability. AI can draft, suggest, and organize, but a person should review and approve every answer, because a person will ultimately have to take responsibility for it.

Hallucinations are not minor errors

In marketing copy, an AI mistake is embarrassing. In compliance, it can create contractual exposure.

If a response claims quarterly penetration testing and you conduct it annually, that is not a typo. It's a misrepresentation. Even subtle wording changes around encryption, logging, or access controls can materially alter meaning.

Human oversight is the control that prevents drift. Without it, "speed" just becomes unmitigated risk.

Context matters more than pattern matching

Security questionnaires often look similar, but the nuance is where the risk lives. One buyer may want a high-level explanation. Another expects technical depth and named tools. A third requires supporting documentation attached to every answer.

AI can recognize patterns, but humans are better able to understand context and intent. The combination produces accurate, defensible responses.

Governance is the real scaling problem

The first questionnaire is tedious. The fifth exposes inconsistency.

Without structure, teams accumulate slightly different versions of the same answer. Over time, those variations create contradictions across customers and make it harder to operationalize your security program.

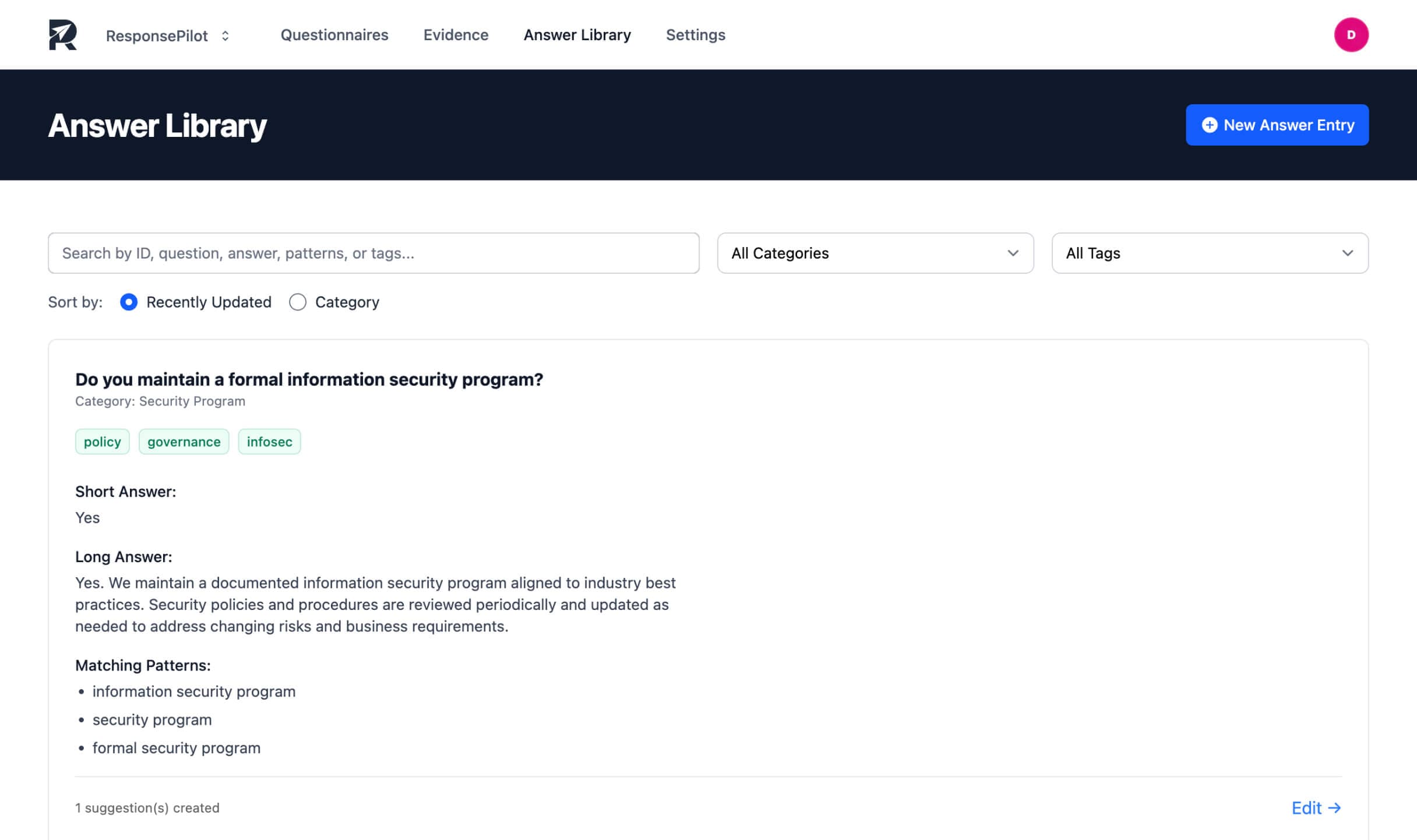

Human-in-the-loop systems create governance. Approved answers become assets, changes are tracked, and accountability is clear.
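To make "approved answers become assets" concrete, here is a minimal sketch of an answer library that keeps every approved version alongside who approved it and when. The names `AnswerLibrary` and `AnswerVersion` are hypothetical and used only for illustration; a real system would add persistence and access control.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Dict, List


@dataclass(frozen=True)
class AnswerVersion:
    """One approved wording of an answer, with its approver and timestamp."""
    text: str
    approved_by: str
    approved_at: datetime


class AnswerLibrary:
    """Hypothetical in-memory store: one append-only history per topic."""

    def __init__(self) -> None:
        self._history: Dict[str, List[AnswerVersion]] = {}

    def approve(self, topic: str, text: str, approver: str) -> AnswerVersion:
        # Never overwrite: each approval appends a new version to the history.
        version = AnswerVersion(text, approver, datetime.now(timezone.utc))
        self._history.setdefault(topic, []).append(version)
        return version

    def current(self, topic: str) -> AnswerVersion:
        # The latest approved version is the single answer reused across customers.
        return self._history[topic][-1]

    def history(self, topic: str) -> List[AnswerVersion]:
        # Return a copy so callers cannot alter the audit trail.
        return list(self._history[topic])


library = AnswerLibrary()
library.approve("pen-testing", "We conduct penetration testing annually.", "cto")
library.approve(
    "pen-testing",
    "We conduct third-party penetration testing annually.",
    "head-of-security",
)
```

Because every questionnaire pulls from `current()`, two customers cannot receive contradictory wording, and `history()` shows who changed an answer and in what order.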

Fully autonomous compliance is a false promise

"Upload and auto-send" sounds nice and efficient, but it's rarely responsible. Security reviews are trust exercises. Buyers are looking for signs of rigor, not automation shortcuts.

A system that drafts without requiring approval optimizes for speed alone. A system that requires human validation optimizes for trust and durability.

The right model is controlled acceleration

AI should reduce repetition, but it should not remove judgment. The ideal workflow is straightforward: draft responses quickly, attach evidence, flag uncertainty, and require approval before export.
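The workflow above can be sketched as a simple approval gate. This is an illustration under stated assumptions, not a reference implementation: `DraftAnswer`, `REVIEW_THRESHOLD`, and the `confidence` score are hypothetical names, and the 0.8 cutoff is arbitrary.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

REVIEW_THRESHOLD = 0.8  # assumed cutoff; tune to your own risk tolerance


@dataclass
class DraftAnswer:
    question: str
    text: str
    confidence: float  # hypothetical 0..1 score produced by the drafting step
    evidence: List[str] = field(default_factory=list)
    approved_by: Optional[str] = None

    @property
    def flagged(self) -> bool:
        # Flag uncertainty: low confidence or no supporting evidence attached.
        return self.confidence < REVIEW_THRESHOLD or not self.evidence


def approve(answer: DraftAnswer, reviewer: str) -> None:
    # A named person takes responsibility for the answer.
    answer.approved_by = reviewer


def export(answers: List[DraftAnswer]) -> List[Dict[str, object]]:
    # Require approval before export: refuse if any answer lacks a reviewer.
    pending = [a.question for a in answers if a.approved_by is None]
    if pending:
        raise PermissionError(f"Unapproved answers: {pending}")
    return [
        {
            "question": a.question,
            "answer": a.text,
            "evidence": a.evidence,
            "approved_by": a.approved_by,
        }
        for a in answers
    ]


draft = DraftAnswer(
    question="How often do you run penetration tests?",
    text="We conduct penetration testing annually.",
    confidence=0.65,
    evidence=["pentest-report.pdf"],
)
```

Calling `export([draft])` at this point raises `PermissionError`. After `approve(draft, "head-of-security")`, the same call returns the answer with its evidence and approver attached. The `flagged` property tells the reviewer where to look first, but approval is required for every answer, flagged or not.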

That's not slower in practice. It's faster over time because it prevents rework and reduces risk.

Speed and trust can coexist

Small teams feel pressure to move quickly, but enterprise buyers demand accuracy.

Human-in-the-loop AI resolves that tension. It accelerates drafting while preserving oversight, and it enables teams to respond in hours without sacrificing credibility.

Compliance is too important to automate blindly. It's also too repetitive not to automate intelligently.